A friend of mine in Kansas (you know, the one who won’t go to a water park) wanted to one-up me on the frost pics, which is fine, since mine were extremely limited. We’ve had this minor competition going ever since he got into nature photography, and it’s a nice motivation to keep improving as long as your ego can weather it – believe it or not, it’s disturbing how many photographers I’ve come across who can’t handle that.

The image above is a little eye-bending because the line between ice and open water appears to be the edge of the water itself, with the bank and its reflection forming a curiously symmetrical rock face. It’s also easy to get the impression that the reflected sun has melted the ice, but I’m more inclined to think that the open water simply has better flow and never got the chance to freeze. The juxtaposition of blue ice and yellow sunlight is also cool. The shale, however, prompted me to research the geology of his area, and it turns out that it might be an excellent place to find fossils. 300 million years ago, that area was the edge of a tropical sea along one coast of the Pangaean supercontinent, and it went through repeated depositions as the sea’s edge advanced and retreated over the millennia. Shale is remarkably easy to search for fossils in, so I’m presently trying to get him out there to look.

But let’s get to the frost pics. This time of year is virtually monochromatic in the mid-latitudes, primarily greys and browns, so any splash of color gets exploited for everything it’s worth. This type of crystal formation is commonly called hoar frost, specifically air hoar: spiky crystals that sprout from surfaces when those surfaces chill below the frost point of the humid air around them, so that water vapor deposits directly as ice.

Sometimes the surface is as fine as a spider web, and those are the conditions to watch for, because a frost-covered orb web is a great photo subject, as you might imagine. I have yet to find all those conditions in place myself – orb webs are often long gone by the time the frost conditions roll in – and to the best of my knowledge Jim hasn’t found them either.

As mentioned earlier, these conditions can be a little tricky. Direct sunlight will eradicate the frost in a hurry, so one either works very quickly or before the sunlight is present. The latter means much lower light levels, slowing the shutter speed, and of course much bluer light. This last bit is okay – we associate blue with cold, so it reinforces the conditions to the viewer, and overriding the white balance to keep this color in place can be quite effective. Normally you might use a white balance setting for open shade or even overcast in this kind of light (if you simply didn’t use auto white balance) – those conditions suffer from reduced yellow and red light, so these are increased in-camera to compensate and not leave the image looking too blue. So to keep the blue, you could use the setting for direct sunlight – this is pretty much white light and the camera makes no compensation. To really enhance it, go for the setting used for incandescent light; such light is very yellow, so the camera compensates by increasing the complementary color, which is of course blue. Experiment freely – the difference can be remarkable, and very expressive.
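
To make the white balance logic concrete, here’s a rough sketch in Python, assuming a simplified model where each white balance preset is just a set of per-channel multipliers chosen to render its intended light source as neutral grey. The light colors below are made-up illustrative numbers, not measurements from any camera, and real cameras work from color temperature and tint with more elaborate processing.

```python
# Hypothetical, illustrative light colors as relative (R, G, B) intensities.
# These numbers are invented for the sketch, not measured values.
LIGHTS = {
    "daylight": (1.00, 1.00, 1.00),  # treated as neutral white light
    "shade": (0.80, 1.00, 1.25),     # bluish: reduced red/yellow
    "tungsten": (1.60, 1.00, 0.55),  # incandescent: very yellow/orange
}


def preset_gains(light):
    """Per-channel multipliers that would render `light` as neutral grey,
    normalized so the green channel is left untouched."""
    r, g, b = LIGHTS[light]
    return (g / r, 1.0, g / b)


def render_white(scene_light, preset):
    """Color a white object lit by `scene_light` ends up with when the
    camera applies the white balance preset named `preset`."""
    sr, sg, sb = LIGHTS[scene_light]
    gr, gg, gb = preset_gains(preset)
    return (round(sr * gr, 2), round(sg * gg, 2), round(sb * gb, 2))


def blueness(rgb):
    """Blue-to-red ratio: above 1.0 reads as a cool (blue) cast."""
    r, _, b = rgb
    return round(b / r, 2)


# Frost photographed in bluish shade light, under three different presets.
for preset in ("shade", "daylight", "tungsten"):
    rgb = render_white("shade", preset)
    print(f"{preset:9s} preset -> {rgb}  blue/red = {blueness(rgb)}")
```

Under the bluish shade light, the shade preset cancels the cast, the daylight preset leaves it alone, and the tungsten preset pushes it further toward blue, which is the same progression described above.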

The conditions of this next image should be very obvious – I’m guessing that Jim was working just as the sun broke through, because there’s still frost visible and I imagine it didn’t last long. Either that or it was freakin’ cold. As indicated in the previous post, these are higher-contrast conditions – note the bright highlights and distinctive shadows, which enhance the shape of this seed pod. The same light also made the bare branches in the background stand out a bit more sharply, which is slightly distracting – much more and it would be working against the image. Ideally, this is where you try to find a dark background, like a patch of shade, to position behind the seed pod, using that contrast to really make it stand out, but such things can be hard to accomplish. Here’s a sneaky little trick, if you’re prepared: put the camera on a tripod, using a remote shutter release if necessary, and use your own shadow to provide the darker background.

It may seem nitpicky – there are surely some people who never noticed the lines in the background until I mentioned them. If I have to point them out, are they really distracting? Yet there’s a difference between conscious awareness of such details and their subconscious effect. Nearly everyone can tell that this was taken in bright sunlight, but many cannot say specifically why – their minds see the light qualities and automatically say “sunlight” without necessarily adding, “because yellow light, and higher contrast.” When it comes to distractions, there are usually a certain number of them in any image, details that we might not include if we were painting the scene, for instance. The goal is to keep these to a minimum so the image makes a stronger impression. While those lines aren’t anything bad, not having them is better.

[This is how I get back at Jim for some of his insect shots with the MP-E 65mm macro lens. I never said it didn’t get petty… ;-) ]

This last one is curious. I’d suspected he was shooting in infra-red, but he told me it’s simply greyscale (the conditions were “pretty close to monochrome anyways”). Nonetheless, there’s definitely a hint of color in there – look at the centers of the flowers – and it’s an RGB image when checked in an editing program. This was taken with a newer Canon EOS M body, so I’m now guessing it was shot in monochrome mode in the camera rather than converted afterwards, and the result isn’t quite perfectly neutral. It also makes the file larger than it needs to be, because each color channel is simply mimicking the other two (more or less, anyway). I always shy away from in-camera effects, preferring the greater control of editing programs, and I think Jim does too, and that he was only trying it out to see how it fared.
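
If you want to check a file like this yourself, here’s a minimal Python sketch, assuming you’ve already read the pixels into a list of (R, G, B) tuples with whatever imaging library you prefer. The function names, the sample pixel values, and the tolerance of 2 levels are hypothetical choices for illustration; the underlying idea is simply that a perfectly neutral greyscale image has identical values in all three channels, so any spread between them reveals a tint.

```python
# Invented sample pixels for illustration: two nearly neutral greys and one
# slightly cool pixel, like the faint color in the flower centers.
SAMPLE = [(120, 120, 120), (200, 201, 199), (90, 96, 104)]


def channel_spread(pixels):
    """Largest difference between any two channels across all pixels.
    0 means every pixel is perfectly neutral grey."""
    return max(max(p) - min(p) for p in pixels)


def mean_tint(pixels):
    """Average (R - B) offset: positive leans warm, negative leans cool."""
    return sum(r - b for r, _, b in pixels) / len(pixels)


def is_effectively_grey(pixels, tolerance=2):
    """True if no pixel's channels differ by more than `tolerance` levels."""
    return channel_spread(pixels) <= tolerance


def to_single_channel(pixels):
    """Collapse each pixel to one value (a simple mean of the channels),
    which is all a truly monochrome image needs to store."""
    return [round(sum(p) / 3) for p in pixels]


print("spread:", channel_spread(SAMPLE))
print("grey?", is_effectively_grey(SAMPLE))
print("tint:", round(mean_tint(SAMPLE), 2))
print("single channel:", to_single_channel(SAMPLE))
```

Storing one channel instead of three cuts the uncompressed pixel data to a third, though with JPEG compression the actual saving on disk will be smaller.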

So now, with all the talk about backgrounds, did you notice the little arms flanking the flowers, almost ‘holding them up’? It’s just another subtlety that enhances the subject, great when you can accomplish it.

First and foremost, and something I teach my students right off the bat, is that photographs by nature have more contrast than what we see with our eyes. They have a narrow dynamic range, a term that straddles the border between explanatory and pompous. We all know, for instance, that dogs can hear higher pitches than we can, and perhaps you know that we only see a narrow spectrum of light, unable to discern infra-red and ultra-violet ourselves, much less gamma rays or microwaves. Well, the camera’s much worse than we are, partially because of sensitivity, but mostly because of the limitations of the medium. You can aim right at the sun and get a shot (not recommended, actually), but printed on paper or glowing from a monitor, it will never make anyone look away in tears – it will simply be white. The image has to dump a lot of brightness levels just to work.
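
That dumping of brightness levels can be sketched too. This is a deliberately simplified Python model, assuming a hypothetical output that holds a fixed number of stops (10 here) below a chosen white point and spreads them evenly from 0 to 255; real cameras and monitors use gamma curves and tone mapping, and the scene luminances are invented, so treat the numbers as illustrative only.

```python
import math


def to_8bit(luminance, white_point, stops=10):
    """Map a relative scene luminance to an 8-bit value (0 to 255).

    Simplified model: `white_point` renders as pure white, anything
    brighter is clipped to 255, and anything more than `stops` stops
    darker is crushed to 0. Values in between are spread evenly in stops.
    """
    if luminance <= 0:
        return 0
    stops_below_white = math.log2(white_point / luminance)
    if stops_below_white <= 0:
        return 255  # at or above the white point: clipped highlight
    if stops_below_white >= stops:
        return 0  # too dark to register: crushed shadow
    return round(255 * (1 - stops_below_white / stops))


# Invented relative luminances, with exposure set for a sunlit white shirt.
scene = {
    "sun": 1_000_000,
    "white shirt": 1_000,
    "shaded bark": 10,
    "deep crevice": 0.5,
}

for name, lum in scene.items():
    print(f"{name:12s} -> {to_8bit(lum, white_point=1_000)}")
```

The sun and the shirt both land on 255: a thousandfold difference in brightness collapses into the same white, while the deep crevice drops to pure black.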

I thought to check on my green lynx spiders, who had weathered the chill with aplomb (go ahead, picture a spider with aplomb). This is the first time I didn’t see mama – she had been looking so decrepit that I was always surprised to find her still around, up until now – but the younguns ventured out as soon as the sun warmed their little chitins. Leaves blown by the wind into the protective cluster of weblines around the former egg sac get incorporated into the shelter, tacked down (I think entirely by accident) by the draglines the spiderlings leave as they swarm all over them. Still, it’s not much of a shelter when the temperatures drop this far, and I’m impressed with the spiders’ ability to endure sub-freezing conditions and bounce right back with a little sunlight. There are fewer of them now, at least some having dispersed by ballooning, but the other two hatchings that I’d observed have vanished almost entirely, so this one has been curiously stable. I plan to keep an eye on it and see what happens – I’m pretty sure these are the offspring of the hatching I observed last year, so they have to do something for the winter.

After a frantic ride of perhaps 10mm, the smaller one was eventually dislodged and tumbled to a stop, miraculously unharmed, as the larger snail thundered blithely away. I waited for a short while to get some pics of the smaller one emerging and toddling off, if only to ensure that it was not limping (picture that, if you will). If you look closely at this image, you can just see the eyestalks emerging at the far right, but after its traumatic ride the snail was understandably cautious and taking its own sweet time about it, which I will leave you to imagine. The sun was bright today and these aren’t ideal conditions for snails, so after a quick spritz of water, I soon returned them all to the shelter of the rocks whence they came, where I’m sure stories will be traded this evening over long draughts of whatever it is that snails quaff.

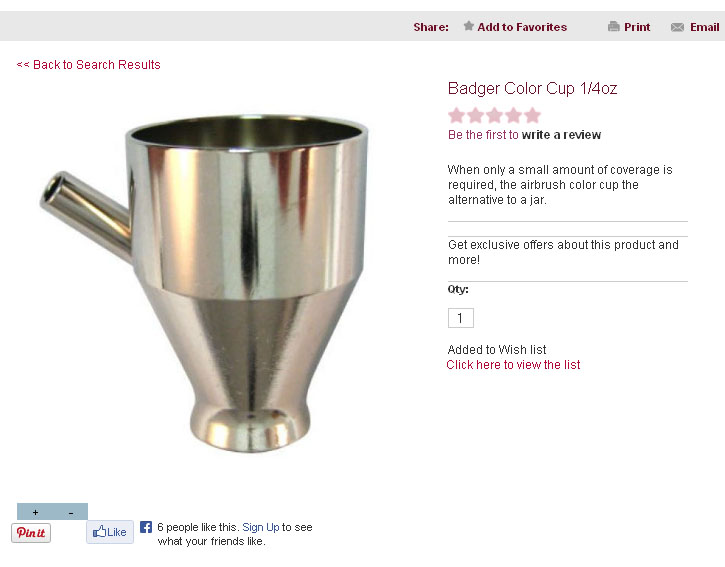

The followup: I found one on eBay that would have been just over five bucks with shipping, provided I didn’t get into a stupid bidding war. The item is worth about two bucks, and six is the maximum I would pay for the damn thing (which, as I would come to find out, is less than half what Michaels wanted for it). So while waiting for the bidding to close, I just made my own from a brass pipe I had and a plastic cap. Works perfectly.
